Trust on first use: The Achilles heel of centralised messengers

April 07, 2021 / Kee Jefferys / Privacy, Security, Technical

We’re here to talk about TOFU, and no, we don’t mean soy-based protein. When we say TOFU, we’re talking about Trust On First Use. Whenever you send a message, you expect it to arrive at its intended destination without being re-routed to, read by, or responded to by anyone but the person (or people) you sent it to. With some messengers (they know who they are), people now realise that’s not the case. Some companies and their apps can’t be trusted to keep your conversations private. But the good guys, the encrypted messaging apps like Session, need to guarantee that your messages arrive without being exposed or tampered with, and without requiring you to trust the server(s) or service provider powering the application. Obviously end-to-end encryption is a big part of the solution here, but it’s not a silver bullet for trust issues when it comes to private messengers.

The trust issue

Most messengers that require a phone number, email address, or username to sign up have a trust problem. Namely, you must fully trust the servers of these applications to correctly direct your messages to the intended recipient, as well as to give you the information required to correctly encrypt messages. To make matters worse, at any point during a conversation on messengers like this, your messages could be re-routed to a completely different person without you ever knowing.

Apps suffering from this trust issue include popular messengers like Signal, WhatsApp, Telegram, Facebook Messenger, and Threema.

TOFU: Trust on First Use

The issue with the above applications is that they rely on an authentication method called Trust on First Use (TOFU). The reason for this is fairly obvious. When you want to message someone on one of these messengers (Signal, for example), you need to know two things before your first message can be sent: the recipient’s identifier, so you can tell Signal who you’re messaging, and the recipient’s public key, so you can encrypt your message. In the case of Signal, the recipient’s long-term public key and identity are the same, and this is referred to as the ‘identity key’. This means Signal’s servers must map each user’s phone number (or other identifier) to their matching identity key.

This allows people with your identifier (in this case, your phone number) to encrypt a message to you without having to actually handle a public key — or even know what a public key is.

There are some legitimate reasons for this system. For example, if your phone breaks, the private part of your identity key will be lost, because it’s only stored on-device. But if you retain access to your phone number, Signal’s servers allow you to generate a new identity key and update the mapping on their server. This way, people can still encrypt and send messages to you using the same phone number.

Every application listed above follows some sort of similar behaviour, with a centralised server storing a mapping between your phone number or email address and an in-app identity/key which allows for the routing of messages.
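To make the mapping concrete, here is a minimal sketch of such a directory. It is a toy model, not any messenger’s real server code: the `KeyDirectory` class, the phone number, and the random 32-byte values standing in for identity keys are all assumptions made for illustration.

```python
import secrets


class KeyDirectory:
    """Toy stand-in for a centralised server that maps identifiers to identity keys."""

    def __init__(self):
        self._keys = {}

    def register(self, identifier: str, identity_key: bytes) -> None:
        # Registering again overwrites the old key, e.g. after a lost phone.
        self._keys[identifier] = identity_key

    def lookup(self, identifier: str) -> bytes:
        # Senders must take whatever the server returns on trust.
        return self._keys[identifier]


directory = KeyDirectory()

# Hypothetical user registers, loses their phone, then registers a new key.
old_key = secrets.token_bytes(32)  # placeholder for a real public key
directory.register("+15550100", old_key)

new_key = secrets.token_bytes(32)
directory.register("+15550100", new_key)

# Anyone messaging this number now encrypts to the new key.
current_key = directory.lookup("+15550100")
```

The convenience and the risk come from the same place: the sender only ever supplies the phone number, and the server alone decides which key comes back.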

Possible attacks

The problem here is clear: when using encrypted messaging applications, there’s an expectation that messages should be encrypted for the correct person and sent to the correct destination without having to trust the server.

Continuing with the Signal example, it’s possible Signal’s servers could give you the identity key of a completely different person than whoever you intended to message.

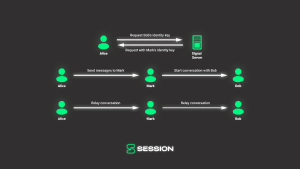

Scarier yet, if someone malicious gained root access to a messenger’s servers, they could insert a man-in-the-middle (MITM) into a conversation. For example, when Alice sends her first message to Bob using his phone number, the Signal servers could return Mark’s identity key instead of Bob’s. Mark can then request Bob’s identity key, impersonating Alice. When Alice sends a message to the person she believes is Bob, the message is actually sent to Mark, who reads the contents, then re-encrypts the message and passes it on to Bob (and vice versa when Bob replies).
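The key substitution in the Alice, Bob, and Mark scenario can be sketched in a few lines. This is a simplified simulation under stated assumptions: the directories are plain dictionaries, the keys are random placeholder bytes, and the `TofuClient` class is hypothetical rather than taken from any real client.

```python
import secrets


def make_identity_key() -> bytes:
    # Placeholder for a real public key; random bytes are enough for the demo.
    return secrets.token_bytes(32)


bob_key = make_identity_key()
mark_key = make_identity_key()

# What an honest server would hold, and what a compromised one returns instead.
honest_directory = {"bob-phone": bob_key}
compromised_directory = {"bob-phone": mark_key}


class TofuClient:
    """Hypothetical client that trusts the first key the server hands back."""

    def __init__(self, directory):
        self.directory = directory
        self.pinned = {}

    def key_for(self, identifier: str) -> bytes:
        # Trust on first use: pin whatever the server returns the first time.
        if identifier not in self.pinned:
            self.pinned[identifier] = self.directory[identifier]
        return self.pinned[identifier]


alice = TofuClient(compromised_directory)
key_alice_uses = alice.key_for("bob-phone")

# Alice has no way to notice: she asked for Bob and silently got Mark.
alice_was_misled = key_alice_uses == mark_key and key_alice_uses != bob_key
```

Nothing in Alice’s client raises an error here, which is the point: from her side, a substituted key looks exactly like a legitimate one.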

Potential solutions for trust on first use

Safety numbers

Signal, WhatsApp, and Wickr all use the ‘Safety Number’ construct. The idea behind safety numbers is that after you have added someone using their phone number, you can physically scan a QR code or confirm the safety numbers of your chat partner outside of the application. If the ‘Safety Numbers’ match on both devices, then you know for sure you’re talking to who you expect to be talking to: the person with that device.

This method works to protect users against man-in-the-middle attacks which are possible due to TOFU. The key issue with the safety number construct is that very few users actually verify their contacts’ safety numbers, and key changes happen regularly enough that people typically ignore them altogether.
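As a rough illustration of why comparing safety numbers exposes a man-in-the-middle, the sketch below derives a short number from the two identity keys each side believes are in use. This is a simplified stand-in, not Signal’s actual derivation: the `safety_number` function, the SHA-256 hash, and the 30-digit format are all assumptions chosen for brevity.

```python
import hashlib
import secrets


def safety_number(key_a: bytes, key_b: bytes, digits: int = 30) -> str:
    # Sort the keys so both parties compute the same value regardless of order.
    first, second = sorted([key_a, key_b])
    digest = hashlib.sha256(first + second).digest()
    number = int.from_bytes(digest, "big") % (10 ** digits)
    text = str(number).zfill(digits)
    # Group into blocks of five digits so the number is easier to read aloud.
    return " ".join(text[i:i + 5] for i in range(0, digits, 5))


alice_key = secrets.token_bytes(32)  # placeholders for real public keys
bob_key = secrets.token_bytes(32)
mark_key = secrets.token_bytes(32)

# Honest case: both devices hold the same pair of keys, so the numbers match.
honest_alice_view = safety_number(alice_key, bob_key)
honest_bob_view = safety_number(bob_key, alice_key)

# MITM case: each side unknowingly holds Mark's key for the other party.
mitm_alice_view = safety_number(alice_key, mark_key)
mitm_bob_view = safety_number(bob_key, mark_key)
```

In the honest case the two views are identical, while in the MITM case they differ. The check only helps if Alice and Bob actually compare the numbers over a channel the server does not control.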

A user study published by USENIX investigated the real world usage of Signal safety numbers. In the study, 10 pairs of participants were asked to send a credit card number over Signal securely. To send the number securely, participants needed to verify their partners’ safety number. All 20 participants failed to properly authenticate their partners, meaning if the Signal server had been hacked or was acting dishonestly, all participants could’ve been connected to an entirely different user, potentially resulting in their credit card information being stolen or leaked.

The ideal solution

Ideally, when you add a new contact on a messaging platform, you immediately know with full confidence you’re speaking to that person. There would be no server you had to trust to connect you with the right contact by keeping a mapping between their phone number, email address, or username and their public key.

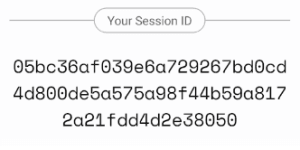

Applications like Session, Tox and Bitmessage achieve this by directly using the device’s or client’s public key as the network identifier and encryption key.
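A minimal sketch of the key-as-identifier idea follows. It assumes a generic scheme where the identifier is simply the hex encoding of a 32-byte public key; the real Session, Tox, and Bitmessage formats each add their own prefixes, checksums, or encodings, and the function names here are hypothetical.

```python
import secrets


def identifier_from_public_key(public_key: bytes) -> str:
    # The identifier is just an encoding of the key itself.
    return public_key.hex()


def public_key_from_identifier(identifier: str) -> bytes:
    # Decoding happens locally, so no server is asked which key to use.
    key = bytes.fromhex(identifier)
    if len(key) != 32:
        raise ValueError("expected a 32-byte public key")
    return key


bob_public_key = secrets.token_bytes(32)  # placeholder for a real public key
bob_id = identifier_from_public_key(bob_public_key)

# Alice receives bob_id directly from Bob and recovers the key on her own device.
recovered = public_key_from_identifier(bob_id)
```

Because the key is recovered from the identifier alone, a dishonest server has nothing to substitute. The remaining trust question is whether Bob’s identifier reached Alice unmodified in the first place.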

The Session model

Everyone on Session has a unique Session ID, and Session allows for offline backup of these Session IDs (using a BIP-39 mnemonic). Whenever you want to message a new user, you use their Session ID. That Session ID provides both their swarm location and their encryption key. Again, as long as your friend gave you their correct Session ID, it’s impossible for you to encrypt a message for the wrong person, even if the service node network (Session’s servers) is dishonest.

The Tox model

Tox gives each user a Tox ID. When you add a new friend on Tox, you use their Tox ID to discover and encrypt your first message for them. Since there is no lookup for their encryption keys, it’s impossible for you to encrypt a message for the wrong person.

The Bitmessage model

Bitmessage uses identities which are encoded public keys. Bitmessage identities act as both an encryption key and a network identifier. When adding a new contact, as long as you have the correct Bitmessage identifier, you can be sure that your message is encrypted and sent to the correct individual.

Conclusion

Although most trusted messaging applications now employ end-to-end encryption, it’s important to understand how it’s implemented. Messaging applications which allow users to add new contacts using phone numbers, email addresses, or usernames are all employing a trust on first use model. This model opens up users to man-in-the-middle attacks during the initial resolution between a username, phone number, or email address and the cryptographic keys required to encrypt a message for the intended recipient.

Some messaging applications have attempted to resolve this issue by introducing the ‘Safety Number’ construct, which requires users to verify cryptographic keys outside of the messaging application. However, user studies have shown that the real world usage of this feature is low, and even those who do attempt to verify may still be vulnerable due to user error. This leaves many people open to attacks which could leak the contents of their conversations.

Session, Bitmessage and Tox have taken their security model one step further by directly using cryptographic keys as a person’s identity in the app. This means every single message sent will always be encrypted for the specified user’s identity, removing the ability for dishonest servers to attack users.
